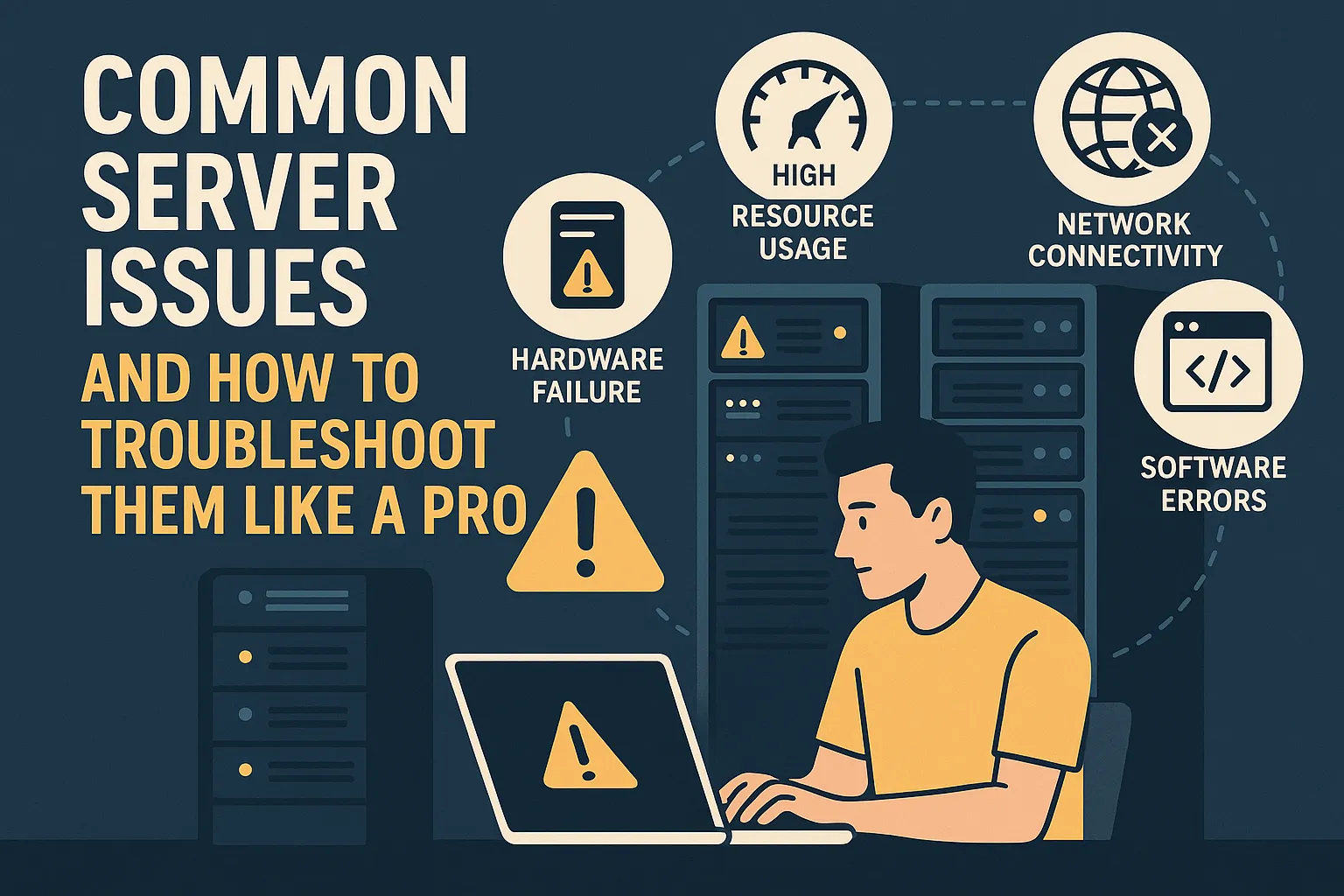

Common Server Issues and How to Troubleshoot Them Like a Pro

Server troubleshooting is an essential skill for every IT professional and system administrator. When servers fail, businesses lose revenue, customers face disruptions, and your reputation takes a hit. This comprehensive guide will teach you how to identify, diagnose, and resolve the most common server problems efficiently, ensuring minimal downtime and maximum performance.

Whether you’re managing web servers, database servers, or application infrastructure, understanding proper troubleshooting techniques can save you hours of frustration and keep your systems running smoothly.

Understanding Server Troubleshooting Fundamentals

Before diving into specific problems, it’s important to understand what server troubleshooting actually involves. At its core, troubleshooting is a systematic process of identifying and resolving issues that affect server performance, availability, or functionality. A server is essentially a powerful computer that provides services, data, or resources to other computers over a network.

Successful troubleshooting requires a methodical approach: gather information, analyze data, form hypotheses, test solutions, and document your findings. This structured methodology helps you solve problems faster and prevents recurring issues.

High CPU Usage: Diagnosing Performance Bottlenecks

One of the most frequent complaints involves sluggish performance caused by excessive CPU consumption. When your processor maxes out, applications slow to a crawl, users experience delays, and the entire system becomes unstable.

How to Identify CPU Problems

Start by checking your CPU usage through monitoring tools. On Linux systems, use a command such as top to see real-time processor statistics. Windows administrators can rely on Task Manager or Performance Monitor. Look for processes consuming unusually high percentages of CPU resources.

Pay attention to both instantaneous spikes and sustained high usage. Brief spikes might be normal during scheduled tasks or backups, but consistent high usage indicates a deeper problem requiring investigation.
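If you want the same number in a script, one possible approach on Linux is to read the aggregate counters in /proc/stat twice and compare them. The sketch below assumes a Linux host and the standard field order of the "cpu" line; the function names are illustrative, not part of any tool.

```python
def parse_cpu_line(line: str) -> tuple[int, int]:
    """Return (idle_ticks, total_ticks) from the aggregate 'cpu' line of /proc/stat."""
    fields = line.split()
    if not fields or fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line")
    # Field order: user nice system idle iowait irq softirq steal
    # (guest time is already counted inside user, so it is skipped here).
    values = [int(v) for v in fields[1:9]]
    values += [0] * (8 - len(values))  # pad if the kernel reports fewer fields
    idle = values[3] + values[4]       # idle + iowait
    return idle, sum(values)


def cpu_busy_percent(before: str, after: str) -> float:
    """Percentage of CPU time spent busy between two samples of the 'cpu' line."""
    idle0, total0 = parse_cpu_line(before)
    idle1, total1 = parse_cpu_line(after)
    total_delta = total1 - total0
    if total_delta <= 0:
        return 0.0
    return 100.0 * (1 - (idle1 - idle0) / total_delta)


def read_cpu_line(path: str = "/proc/stat") -> str:
    """Read the first line of /proc/stat (Linux only)."""
    with open(path) as handle:
        return handle.readline()
```

Calling read_cpu_line() twice a few seconds apart and passing both results to cpu_busy_percent gives one sample. Collecting such samples over time is what lets you tell a brief spike from sustained load.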

Practical Solutions for CPU Issues

First, identify which applications or services are monopolizing your processor. Sometimes a single runaway process causes the bottleneck. Once identified, determine whether the process is legitimate or potentially malicious. Legitimate processes might include database queries, backup operations, or scheduled tasks that coincidentally run during peak hours.

Consider restarting the problematic service if it appears stuck in an infinite loop. For recurring issues, investigate whether the application needs optimization, additional resources, or configuration adjustments. Memory leaks, inefficient code, or poorly designed database queries often cause sustained high CPU usage.

Working with your development team to optimize these elements provides long-term solutions. Sometimes upgrading to faster processors or adding more CPU cores becomes necessary when you’ve exhausted software optimization options.

Memory Exhaustion: Preventing System Crashes

Memory issues manifest differently than CPU problems but prove equally disruptive. When a server runs out of available RAM, it starts using swap space on the hard drive, drastically reducing performance. Eventually, the system may crash or become completely unresponsive.

Recognizing Memory Problems

Watch for warning signs like increasing swap usage, applications crashing unexpectedly, or the dreaded “out of memory” errors in system logs. Linux users can run a command such as free to check memory availability, while Windows administrators should examine the Memory section in Performance Monitor.

Monitor your memory usage trends over time. Gradual increases suggest memory leaks, while sudden spikes might indicate legitimate workload increases or configuration problems.
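To record these figures over time, a script can read /proc/meminfo directly. This is a minimal sketch that assumes a Linux host with a kernel that reports MemAvailable; the function names are made up for illustration.

```python
def parse_meminfo(text: str) -> dict[str, int]:
    """Map each /proc/meminfo field name to its number (kB for most fields)."""
    info = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts:
            info[name.strip()] = int(parts[0])
    return info


def memory_summary(info: dict[str, int]) -> dict[str, float]:
    """Summarise available memory and swap in use from parsed meminfo values."""
    total = info["MemTotal"]
    available = info["MemAvailable"]
    swap_used = info.get("SwapTotal", 0) - info.get("SwapFree", 0)
    return {
        "available_percent": 100.0 * available / total,
        "swap_used_kb": float(swap_used),
    }


def read_meminfo(path: str = "/proc/meminfo") -> dict[str, int]:
    """Read and parse /proc/meminfo (Linux only)."""
    with open(path) as handle:
        return parse_meminfo(handle.read())
```

Logging memory_summary(read_meminfo()) at a fixed interval produces the trend data this section talks about: a falling available_percent together with a rising swap_used_kb is the pattern to watch.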

Effective Memory Management Solutions

Begin by identifying which processes consume the most memory. Memory leaks, where applications gradually claim more RAM without releasing it, represent a common culprit. Applications with memory leaks show steadily increasing memory consumption over time, even when workload remains constant.
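One rough way to tell a steady climb from a one-off spike is to fit a straight line through evenly spaced memory samples and check how often consecutive readings rise. The sketch below is a heuristic under that assumption, not a definitive leak detector; the threshold values are placeholders you would tune for your own workload.

```python
def slope_per_sample(values: list[float]) -> float:
    """Least-squares slope of values against their index (units per sample)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator


def looks_like_leak(values: list[float], min_slope: float,
                    min_rising_fraction: float = 0.8) -> bool:
    """Heuristic: memory trends upward and most consecutive samples increase."""
    rising = sum(1 for a, b in zip(values, values[1:]) if b > a)
    rising_fraction = rising / (len(values) - 1)
    return slope_per_sample(values) >= min_slope and rising_fraction >= min_rising_fraction
```

Feed it the resident memory of one process, sampled at a fixed interval while the workload is roughly constant. A single large spike fails the rising-fraction check, while a slow climb passes both.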

Short-term fixes include restarting the memory-hungry application to reclaim resources. However, sustainable solutions require addressing the root cause. This might involve updating software to patched versions, adjusting application configurations to use memory more efficiently, or upgrading your server hardware to accommodate legitimate memory requirements.

Implement proper monitoring and alerting for memory usage. Set thresholds that notify you when memory consumption reaches concerning levels, giving you time to investigate before problems escalate into emergencies.

Network Connectivity: Resolving Access Problems

Network issues can prevent users from reaching your server at all, making them critical emergencies that require immediate attention. These problems stem from various sources, including misconfigured firewalls, DNS failures, routing issues, or physical network hardware problems.

Diagnosing Network Issues

Start with basic connectivity tests. The ping command checks whether your server responds to network requests. If ping fails, the problem is more likely network-related than application-specific, although some hosts and firewalls block ICMP, so a failed ping alone is not conclusive. Use traceroute (Linux) or tracert (Windows) to identify where packets stop along their path to your server.

Check DNS resolution using nslookup or dig commands. Many apparent server outages actually result from DNS problems where domain names fail to resolve to correct IP addresses. Verify that your DNS records point to the right locations and that DNS servers respond properly.
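The same check can be scripted with the resolver your operating system already uses. This is a small sketch built on Python's socket.getaddrinfo; the helper names and any addresses you pass in are your own assumptions.

```python
import socket


def resolve(hostname: str) -> list[str]:
    """Return the sorted, de-duplicated IP addresses a name resolves to, or [] on failure."""
    try:
        results = socket.getaddrinfo(hostname, None, proto=socket.IPPROTO_TCP)
    except socket.gaierror:
        return []
    # Each result is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return sorted({sockaddr[0] for *_, sockaddr in results})


def dns_matches(hostname: str, expected_ips: set[str]) -> bool:
    """True when the name resolves and every returned address is one we expect."""
    addresses = resolve(hostname)
    return bool(addresses) and set(addresses) <= expected_ips
```

Note that this asks the local system resolver, so it reflects what this machine sees, including cached answers and /etc/hosts entries. Running it from more than one network gives a fuller picture than a single result.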

Resolution Strategies for Network Problems

For firewall issues, review your rules to ensure legitimate traffic can reach the necessary ports. Web servers typically need port 80 open for HTTP and port 443 for HTTPS. SSH usually uses port 22, while database servers listen on their own ports, such as 3306 for MySQL or 5432 for PostgreSQL.
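A quick way to confirm whether a port is reachable from a given machine is to attempt a TCP connection. The sketch below uses the default ports named above; the service labels and the helper name are illustrative.

```python
import socket

# Default ports mentioned in this section; your services may be configured differently.
COMMON_PORTS = {"http": 80, "https": 443, "ssh": 22, "mysql": 3306, "postgresql": 5432}


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts alike.
        return False
```

A False result does not say why the connection failed. A timeout often points to a firewall silently dropping packets, while an immediate refusal usually means nothing is listening on that port.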

Examine your server logs for connection attempts and rejections. These logs reveal whether traffic reaches your server but gets blocked by security rules. Sometimes recent configuration changes inadvertently block legitimate traffic, so reviewing recent modifications helps identify the problem.

Test connectivity from multiple locations to determine if the issue affects all users or only specific networks. This information helps narrow down whether the problem lies with your server, your network infrastructure, or external routing issues.

Disk Space Exhaustion: Managing Storage Effectively

Running out of disk space causes numerous problems, from failed backups to crashed applications and corrupted databases. Servers often fill up faster than expected due to log files, temporary files, and unexpected data growth.

Finding Space Hogs

Use disk usage commands to locate what consumes your storage. On Linux, df shows overall disk usage by filesystem, while du reveals which directories contain the most data. Windows users can rely on Disk Cleanup utilities or third-party tools like WinDirStat for visual representations of space usage.

Don’t wait until you’re completely out of space. Start investigating when disk usage reaches 80% to give yourself time to implement solutions before problems occur.
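The 80% rule of thumb is easy to automate. Here is a minimal sketch using shutil.disk_usage from the Python standard library; the function names and the default threshold are assumptions taken from the guideline above.

```python
import shutil


def used_percent(path: str) -> float:
    """Percentage of the filesystem containing `path` that is in use."""
    usage = shutil.disk_usage(path)
    if usage.total == 0:
        return 0.0
    return 100.0 * usage.used / usage.total


def needs_attention(path: str, threshold: float = 80.0) -> bool:
    """True once usage reaches the threshold (80% by default)."""
    return used_percent(path) >= threshold
```

The figure can differ slightly from what df prints, because df accounts for blocks reserved for the root user. Treat the threshold as approximate rather than exact.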

Clearing Space Safely

Log files frequently cause space issues. Application and system logs grow continuously, sometimes filling drives within days if not properly managed. Implement log rotation policies that automatically archive or delete old logs. Most logging systems include built-in rotation capabilities that compress older logs and delete ancient entries.
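For applications that write their own logs, rotation can be built into the logger itself. The sketch below uses RotatingFileHandler from Python's logging module as one example of built-in rotation; the size limit, backup count, and logger name are placeholder values. This handler caps total size but does not compress old files.

```python
import logging
from logging.handlers import RotatingFileHandler


def build_rotating_logger(path: str, max_bytes: int = 1_000_000,
                          backups: int = 5) -> logging.Logger:
    """Create a logger that rolls over at max_bytes and keeps `backups` old files."""
    logger = logging.getLogger(f"rotating:{path}")  # hypothetical naming scheme
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep records out of the root logger
    if not logger.handlers:   # avoid stacking handlers on repeated calls
        handler = RotatingFileHandler(path, maxBytes=max_bytes, backupCount=backups)
        handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
        logger.addHandler(handler)
    return logger
```

With these settings the log never occupies much more than max_bytes times (backups + 1) on disk, because the oldest backup is deleted on each rollover. For system-level logs, the rotation facility that ships with your operating system is usually the better fit.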

Temporary files accumulate in designated temp directories. Clearing these periodically frees substantial space. However, exercise caution with cleaning operations since some temporary files might be actively used by running applications. Consider establishing automated cleanup scripts that run during low-traffic periods.

For persistent space shortages, evaluate whether you need additional storage capacity. Sometimes the solution involves expanding disk allocations, especially for databases and file storage systems experiencing legitimate growth. Cloud environments make adding storage relatively simple, while physical servers might require hardware upgrades.

Database Performance: Optimizing Query Speed

Slow database queries cripple application performance since most modern applications depend heavily on database operations. Users experience timeouts, slow page loads, and general frustration when databases underperform.

Identifying Database Bottlenecks

Enable slow query logging to capture queries taking excessive time to execute. Most database systems include built-in profiling tools showing query execution times, resource consumption, and performance statistics. MySQL users can examine the slow query log, while PostgreSQL administrators can review the statistics collected by the pg_stat_statements extension once it is enabled.

Monitor key database metrics like query throughput, connection counts, and cache hit ratios. These metrics help you understand normal database behavior and recognize when performance degrades.

Optimization Approaches

Missing indexes often cause slow queries. Indexes function like book indexes, helping databases locate information quickly without scanning entire tables. Analyze your slow queries to determine whether adding indexes would improve performance. However, avoid over-indexing since indexes consume space and slow down write operations.

Query optimization involves rewriting inefficient queries to reduce resource consumption. Sometimes poorly written queries scan entire tables when they could use targeted lookups. Work with developers to refactor problematic queries, using EXPLAIN plans to understand how databases execute queries.
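To see the effect of an index on a query plan without touching a production database, you can experiment with SQLite, which ships with Python. This is a stand-in for illustration only: the table, column, and index names are invented, and MySQL and PostgreSQL format their EXPLAIN output differently.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> list[str]:
    """Return the human-readable steps SQLite reports for a query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[-1] for row in rows]  # the last column holds the description


# Hypothetical table used only for this demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, i * 1.5) for i in range(1000)],
)

QUERY = "SELECT * FROM orders WHERE customer_id = ?"

plan_before = query_plan(conn, QUERY, (42,))   # no index yet: full table scan
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn, QUERY, (42,))    # the planner can now use the index

print(plan_before)
print(plan_after)
```

Before the index exists the plan reports a scan of the whole table. Afterwards it reports a search using idx_orders_customer. The exact wording varies between SQLite versions.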

Regular database maintenance tasks like vacuuming, optimizing tables, and updating statistics help maintain performance. Schedule these during low-traffic windows to minimize user impact. Many databases can perform these tasks automatically if configured properly.

Consider implementing caching layers to reduce database load. Technologies like Redis or Memcached store frequently accessed data in memory, dramatically reducing database queries for common operations.
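The pattern behind such a caching layer is often called cache-aside: look in the cache first and fall back to the database only on a miss. The sketch below uses an in-process dictionary with a time-to-live as a stand-in for Redis or Memcached; the class and the loader function are hypothetical.

```python
import time
from typing import Any, Callable


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: dict[Any, tuple[float, Any]] = {}

    def get_or_load(self, key: Any, loader: Callable[[Any], Any]) -> Any:
        """Return the cached value, calling loader(key) only on a miss or expiry."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]
        value = loader(key)  # e.g. the real database query
        self._entries[key] = (now + self._ttl, value)
        return value
```

The time-to-live is the main design choice: a longer value removes more database load but serves staler data. A shared cache server adds what this sketch lacks, namely a cache that every application instance can see and that survives restarts.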

SSL Certificate Management: Avoiding Security Warnings

Expired SSL certificates immediately break secure connections, displaying scary warning messages to users. These issues are entirely preventable through proper monitoring but surprisingly common in practice.

Preventing Certificate Emergencies

Implement monitoring that alerts you weeks before certificate expiration. Many certificate authorities provide email reminders, but having multiple alert mechanisms ensures nothing slips through. Some organizations use a certificate authority such as Let’s Encrypt together with a client that renews certificates automatically.

Maintain an inventory of all certificates used across your infrastructure. This inventory should include expiration dates, responsible parties, and renewal procedures. Regular audits of this inventory prevent surprises.

Certificate Renewal Process

Renewing certificates involves generating new certificate signing requests, obtaining updated certificates from your certificate authority, and installing them on your server. Test the new certificate in a staging environment before deploying to production when possible.

After installation, verify the certificate works correctly using online SSL testing tools. These tools check certificate validity, proper chain configuration, and potential security vulnerabilities. Popular tools include SSL Labs’ SSL Server Test, which provides comprehensive analysis and grades your configuration.

Proactive Monitoring and Prevention

Professional system administrators emphasize prevention over reactive problem-solving. Implementing comprehensive monitoring catches problems before they impact users, reducing emergency situations significantly.

Establishing Performance Baselines

Establish baseline metrics for normal server performance. Understanding typical CPU usage, memory consumption, network traffic, and disk I/O patterns helps you recognize anomalies quickly. When metrics deviate significantly from baselines, investigate immediately rather than waiting for users to report problems.

Document your baselines and review them periodically. As your infrastructure grows and usage patterns change, baselines should evolve accordingly. What’s normal today might be concerning tomorrow if your traffic doubles.
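One simple way to express a baseline in code is the mean and standard deviation of a metric during normal operation, with new readings flagged when they fall too many standard deviations away. This is a sketch of that idea only; the three-sigma cutoff is a common convention, not a recommendation from this guide.

```python
import statistics


def build_baseline(samples: list[float]) -> tuple[float, float]:
    """Return (mean, sample standard deviation) of readings taken during normal operation."""
    return statistics.fmean(samples), statistics.stdev(samples)


def is_anomalous(value: float, baseline: tuple[float, float],
                 max_sigma: float = 3.0) -> bool:
    """True when value lies more than max_sigma standard deviations from the mean."""
    mean, stdev = baseline
    if stdev == 0:
        return value != mean
    return abs(value - mean) / stdev > max_sigma
```

Rebuilding the baseline from a recent window of data on a schedule is one way to let it evolve as traffic grows.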

Implementing Effective Alerting

Set up alerting thresholds that notify you of potential problems. Configure alerts for critical metrics such as free disk space dropping below 15 percent, CPU usage exceeding 80 percent for sustained periods, or memory utilization approaching maximum capacity.

Avoid alert fatigue by tuning thresholds appropriately. Too many false alarms train people to ignore alerts, defeating their purpose. Balance sensitivity with specificity to ensure alerts indicate real problems requiring attention.
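Requiring a condition to hold for several consecutive samples is one common way to cut false alarms. The sketch below encodes the disk and CPU thresholds mentioned in this section; the metric names, the rule structure, and the choice of five samples are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Rule:
    name: str
    breached: Callable[[float], bool]
    sustained_samples: int = 1  # consecutive breaching samples required to fire


# Hypothetical metric names; the thresholds follow the figures in the text.
RULES = {
    "disk_free_percent": Rule("disk space low", lambda v: v < 15.0),
    "cpu_percent": Rule("CPU high", lambda v: v > 80.0, sustained_samples=5),
}


def firing_alerts(history: dict[str, Sequence[float]],
                  rules: dict[str, Rule] = RULES) -> list[str]:
    """Names of rules whose most recent samples all breach the threshold."""
    alerts = []
    for metric, rule in rules.items():
        recent = list(history.get(metric, ()))[-rule.sustained_samples:]
        if len(recent) == rule.sustained_samples and all(rule.breached(v) for v in recent):
            alerts.append(rule.name)
    return alerts
```

With this rule set a single CPU reading of 95 percent fires nothing, while five consecutive readings above 80 percent do. Raising sustained_samples trades detection speed for fewer false alarms.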

Regular Maintenance Schedules

Regular maintenance prevents many issues before they become emergencies. Apply security patches promptly to protect against vulnerabilities. Update software components to benefit from bug fixes and performance improvements. Review system logs periodically to catch warning signs of developing problems.

Create maintenance windows where you can perform updates and potentially disruptive tasks. Communicate these windows to users so they understand when service interruptions might occur. Use maintenance windows to test backup restoration procedures, validate monitoring systems, and perform other preventive activities.

Building Your Troubleshooting Toolkit

Effective troubleshooting requires proper tools and resources. Invest in monitoring software that provides visibility into system performance. Consider both commercial solutions and open-source alternatives depending on your budget and requirements.

Build a knowledge base documenting common problems and solutions. When you resolve an issue, document the symptoms, investigation steps, root cause, and solution. This knowledge base becomes invaluable for training new team members and solving recurring problems faster.

Develop troubleshooting checklists for common scenarios. These checklists ensure you don’t overlook obvious possibilities when working under pressure. Include basic steps like checking recent changes, reviewing logs, and verifying connectivity before diving into complex investigations.

Conclusion: Mastering the Art of Troubleshooting

Server troubleshooting combines technical knowledge, systematic thinking, and practical experience. By following the methodologies outlined in this guide, you can resolve issues more quickly and minimize downtime. Remember that every problem you solve adds to your expertise, making future troubleshooting faster and more effective.

Focus on building strong fundamentals in monitoring, logging, and analysis. These skills transfer across different technologies and platforms, making you a more versatile administrator. Stay curious, keep learning, and don’t hesitate to seek help from colleagues or community resources when facing unfamiliar problems.

Professional server management balances reactive troubleshooting with proactive monitoring and maintenance. Invest time in preventive measures to reduce emergency situations. When problems do arise, having practiced troubleshooting procedures ensures quick resolution with minimal disruption to your users and business operations.
